2026-04-29

When Your Labels Lie: What a Neuroscience PhD Taught Me About Healthcare AI

A counterintuitive lesson from a neuroscience PhD

For months, my classifier for sharp-wave ripples (SWRs), the hippocampal oscillations tied to memory consolidation, was stuck at 73% accuracy.

Changed the architecture. Scaled the data. Tuned the hyperparameters. Nothing moved.

The real problem wasn't the model. It was the labels.

When biology bleeds across the label window

My labels were temporal: SWRs recorded before learning versus after. The catch is that the protocol ran across consecutive days, and memory consolidation doesn't stop at the end of a session.

Day N's "before" SWRs still carried the traces of the previous days' learning. The result: 12 to 13% of the signals were structurally mislabeled.

Not an annotation error. A biological bleed across the label's time window.

Self-supervised: 73% to 84%

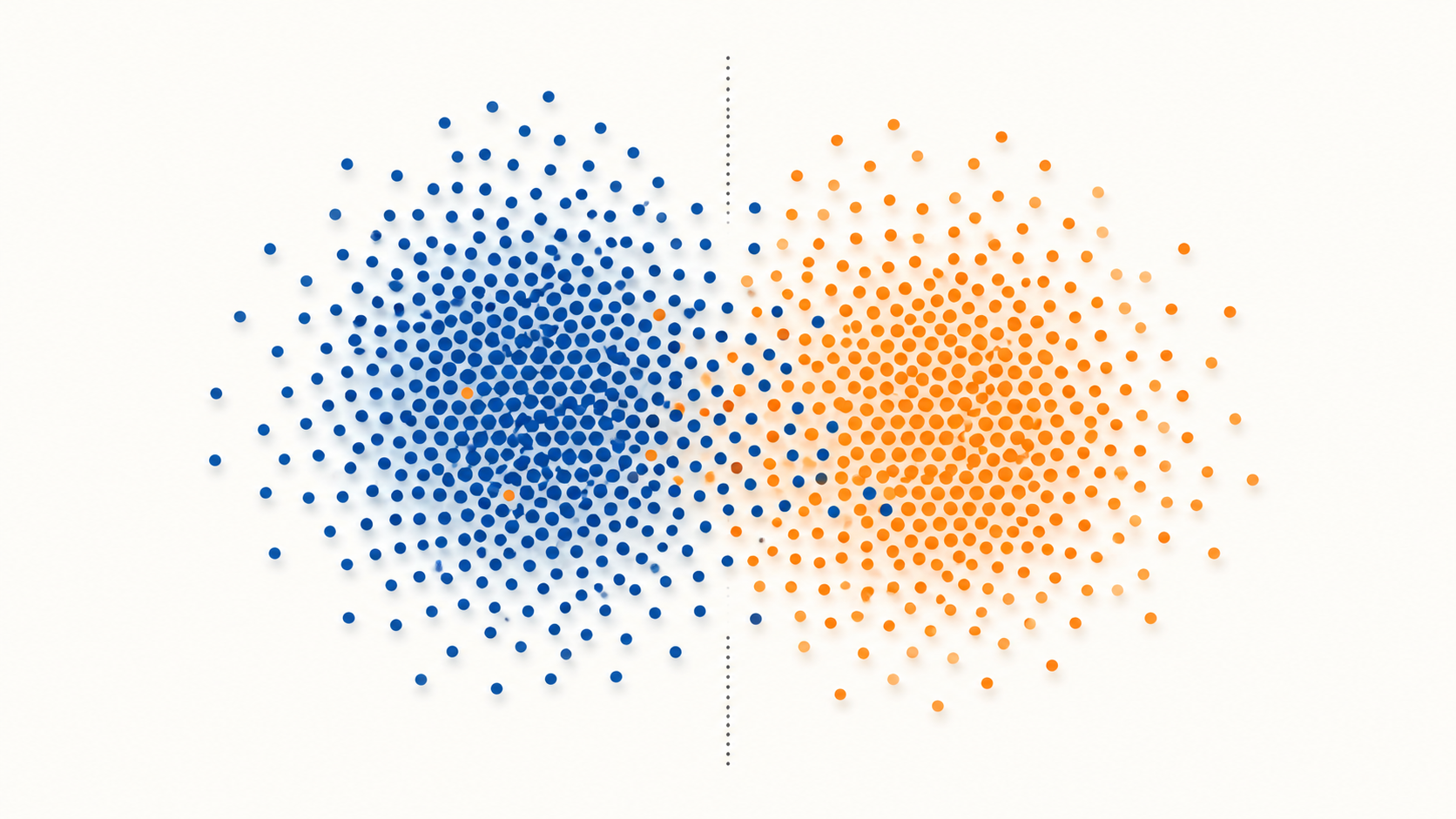

I built a self-supervised pipeline that re-grouped the dataset according to the intrinsic structure of the signal, independent of the temporal label.

Retrained on the same architecture, the same data volume: 73% to 84%.

Why this is everywhere in healthcare AI

The mechanism reaches well beyond neuroscience. It's structural the moment you work on living systems:

- Overlapping disease phases — an "early-stage" patient already carries the biological signature of the next stage

- Treatment effects that persist — an "off-treatment" patient keeps altered biomarkers for weeks

- Comorbidities contaminating control cohorts — a "healthy" subject often carries an undiagnosed subclinical condition

- Administrative clinical stratifications: categories that don't always reflect the underlying biology

Optimizing a model against those labels without questioning their structure means validating an administrative boundary with an elegant metric.

Three questions to ask before optimizing

1. What do your labels actually measure?

Is a "before/after", "sick/healthy", "responder/non-responder" label biologically clean, or is it the result of a temporal, clinical, or administrative convention? The answer changes what you're allowed to optimize.

2. What does the intrinsic structure of the signal say?

Before training a supervised classifier, run unsupervised clustering on the raw features. If the natural clusters don't align with the labels, supervised training will pull the model toward the label boundary, which may be the less biological of the two.
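One way to put a number on that alignment, as a minimal sketch: take the cluster assignments from whatever clustering method you ran and compare them with the labels using the adjusted Rand index, which sits near 0 for chance-level agreement and reaches 1 for identical partitions. The function name and the toy data are illustrative assumptions, not the pipeline described above.

```python
from collections import Counter
from math import comb


def adjusted_rand_index(labels, clusters):
    """Chance-corrected agreement between two partitions of the same samples."""
    if len(labels) != len(clusters):
        raise ValueError("labels and clusters must have the same length")

    total_pairs = comb(len(labels), 2)
    if total_pairs == 0:
        return 1.0  # fewer than two samples: nothing to disagree on

    # Count pairs of samples that land together in each partition.
    together_in_both = sum(comb(c, 2) for c in Counter(zip(labels, clusters)).values())
    together_in_labels = sum(comb(c, 2) for c in Counter(labels).values())
    together_in_clusters = sum(comb(c, 2) for c in Counter(clusters).values())

    expected = together_in_labels * together_in_clusters / total_pairs
    maximum = (together_in_labels + together_in_clusters) / 2
    if maximum == expected:
        return 1.0  # degenerate case, e.g. a single group on both sides
    return (together_in_both - expected) / (maximum - expected)


# Toy example (made-up values): cluster IDs are arbitrary, only the grouping matters.
labels = ["before", "before", "before", "after", "after", "after"]
aligned = [1, 1, 1, 0, 0, 0]      # same grouping as the labels
misaligned = [0, 1, 0, 1, 0, 1]   # grouping that cuts across the labels

print(adjusted_rand_index(labels, aligned))
print(adjusted_rand_index(labels, misaligned))
```

A score close to 0 on real data doesn't say which boundary is the right one. It only says the labels and the signal structure disagree, which is the cue to look at the labels before touching the model.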

3. What's your acceptable label-noise rate?

A mislabeling rate of 10 to 15% can be enough to cap a model well below its real capacity. A label-noise audit should come before hyperparameter tuning, not after.
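A back-of-the-envelope way to see the cap, under simplifying assumptions that are mine and not measured on any dataset here: binary labels, and a fraction of the test labels flipped independently of the model's own errors. In that setting, a model that is truly right with probability `a` is scored as right with probability `a * (1 - r) + (1 - a) * r`, where `r` is the flip rate.

```python
def measured_accuracy(true_accuracy, noise_rate):
    """Accuracy as scored against noisy binary test labels.

    Assumes each test label is flipped with probability `noise_rate`,
    independently of whether the model is right or wrong on that sample.
    """
    if not (0.0 <= true_accuracy <= 1.0 and 0.0 <= noise_rate <= 1.0):
        raise ValueError("both arguments must be between 0 and 1")
    # Scored as correct when: truly right on a clean label,
    # or truly wrong on a flipped label.
    return true_accuracy * (1 - noise_rate) + (1 - true_accuracy) * noise_rate


# Hypothetical flip rate of 12.5%: even a perfect model is scored below 90%.
print(measured_accuracy(1.0, 0.125))  # 0.875
```

This only covers the scoring side. Training on the same noisy labels can cost more on top of that ceiling.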

The metric that lies vs the one that counts

A stable accuracy on a test set built with the same biased labels as the training set validates nothing. It confirms that the model reproduces the bias.

Real validation goes through intrinsic features, subgroups defined independently of the label, or a self-supervised re-clustering acting as a second eye.
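The subgroup check can be sketched in a few lines, assuming you have a grouping variable that was defined without looking at the label (recording day, acquisition site, device). The function name and the toy values below are hypothetical.

```python
from collections import defaultdict


def accuracy_by_subgroup(y_true, y_pred, subgroup):
    """Accuracy computed separately for each value of a label-independent grouping."""
    if not (len(y_true) == len(y_pred) == len(subgroup)):
        raise ValueError("all inputs must have the same length")
    hits = defaultdict(int)
    totals = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, subgroup):
        totals[group] += 1
        hits[group] += int(truth == pred)
    return {group: hits[group] / totals[group] for group in totals}


# Toy example (made-up values): a single global accuracy would hide the gap between days.
y_true = ["before", "before", "after", "after"]
y_pred = ["before", "before", "after", "before"]
day = ["day1", "day1", "day2", "day2"]

print(accuracy_by_subgroup(y_true, y_pred, day))  # {'day1': 1.0, 'day2': 0.5}
```

A gap between subgroups is not proof of label bias on its own, but when it follows a variable the biology cares about, it is a reason to audit the labels in the weaker subgroup first.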

If you're running a healthcare AI project where the model is plateauing without obvious reason — or a pipeline where you suspect the labels are lying — let's take 30 minutes to talk it through.